Let’s be honest: everyone is “doing AI.” Very few are shipping AI safely, repeatedly, and at scale.

Most AI roadmaps fail for a boring reason: they’re wish lists. A menu of exciting use cases (copilots, fraud AI, agentic workflows, predictive maintenance…) plus a vague timeline, plus the unspoken hope that the organization will magically become capable enough to deliver all of it.

When leaders ask, “Which AI use cases should we prioritize?”, they’re often skipping the more important question: What is the next capability constraint we must remove so AI can be delivered safely, repeatedly, and at scale?

That’s what maturity-based prioritization is: sequencing your roadmap by what your enterprise can actually support across governance, data, engineering, operating model, and adoption, not by what looks best in a PowerPoint presentation.

- The wrong assumption: “If we pick the best use cases, the roadmap will work”

- Model slice: The 5-Lens Prioritization Stack

- The maturity-based roadmap: what to do first, second, and never (yet)

- What to do first: “Credibility builders” and “constraint removers”

- What to do second: “Repeatable deployments” in bounded domains

- What to do “Never (Yet)”: the roadmap’s most strategic word

- The executive decision: stop funding “more pilots” and fund “more maturity”

- One practical move: run a 2-hour “Maturity + Portfolio Triage” session

The wrong assumption: “If we pick the best use cases, the roadmap will work”

Here’s the trap: organizations rank use cases by ROI as if AI delivery were a vending machine. But ROI rankings ignore the hidden work that determines whether AI can be shipped and sustained:

- Can you get data reliably and legally?

- Can you measure model performance and drift in production?

- Can you explain outcomes when regulators, auditors, customers, or internal stakeholders ask?

- Do teams know who owns decisions when things go wrong?

- Can the workflow absorb the change without breaking?

This is why “best use case” thinking consistently produces lots of pilots and little production.

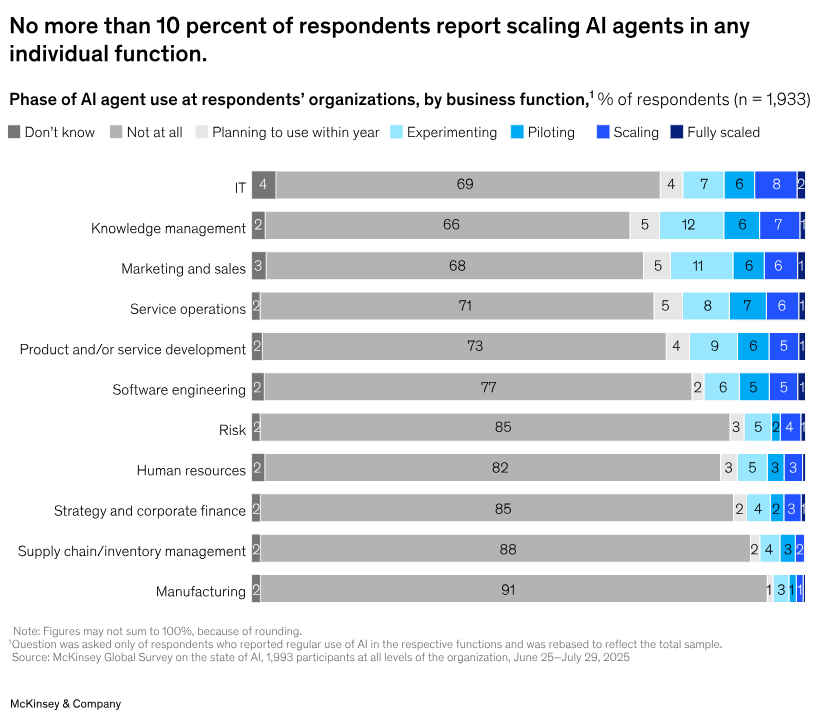

McKinsey’s State of AI: Global Survey 2025 makes the same point in a more empirical way: value capture correlates with management practices across multiple dimensions, not just picking shiny use cases. Those dimensions include strategy, talent, operating model, technology, data, and adoption/scaling.

So the goal of a roadmap isn’t “identify the most valuable use cases.”

The goal is: build maturity in the right sequence so the valuable use cases become deliverable.

Model slice: The 5-Lens Prioritization Stack

Use this as your roadmap “sorting algorithm.” It keeps prioritization decision-grade without turning this into an implementation manual.

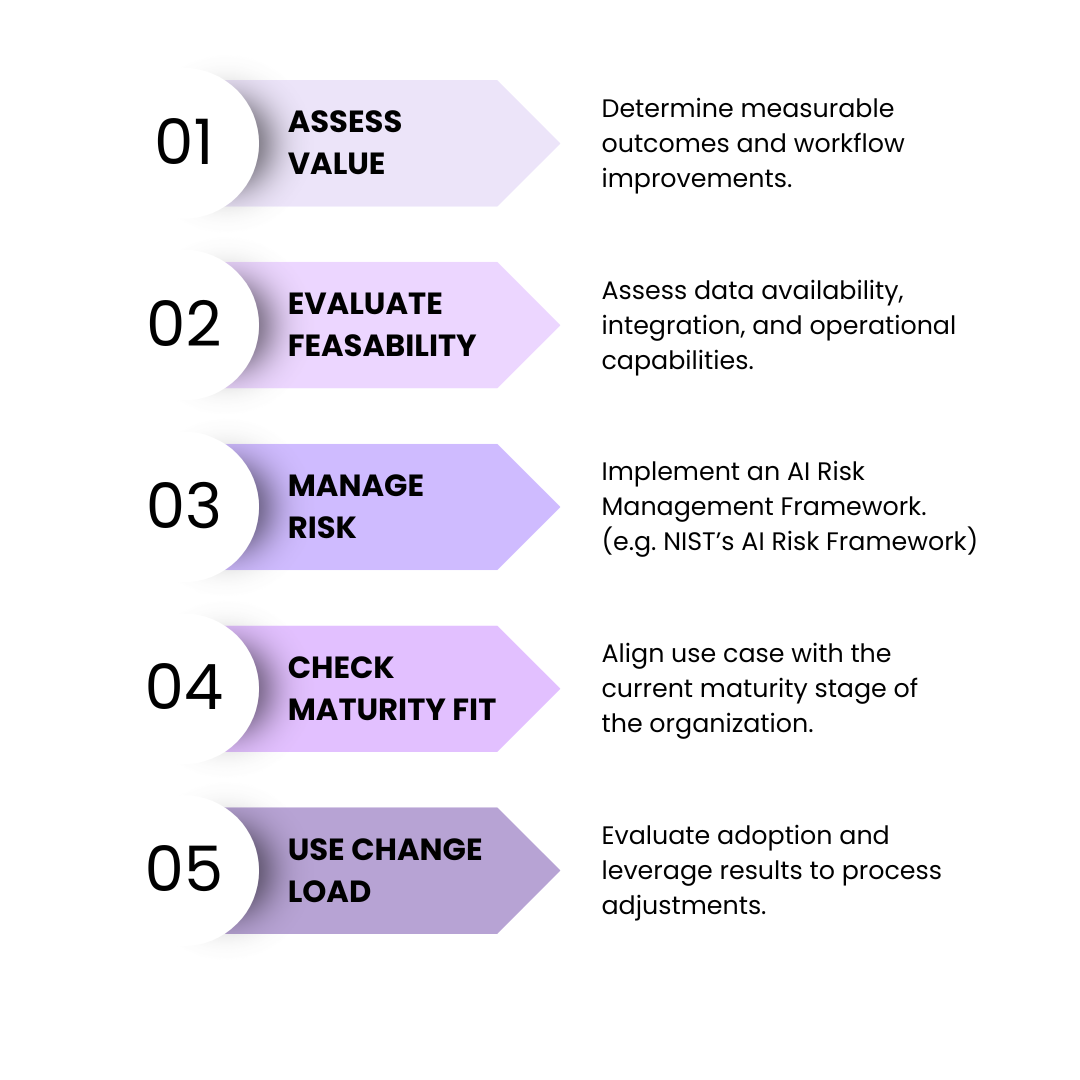

Lens 1: Value

What measurable outcome improves if we succeed?

Examples: lower cost-to-serve, faster cycle time, higher conversion, better forecast accuracy.

Rule: If you can’t name the workflow or decision that improves, it’s not a use case; it’s a demo.

Lens 2: Feasibility

Can you actually build and run it?

Includes data availability, integration complexity, deployment approach, and monitoring/incident response capability.

Rule: Feasibility isn’t “can we prototype it.” It’s “can we operate it.”

Lens 3: Risk & assurance

Can you defend it when it matters?

NIST’s AI Risk Management Framework frames risk management as lifecycle work organized around Govern, Map, Measure, and Manage, with governance as cross-cutting, not a “later” phase.

Rule: High-impact, high-liability use cases shouldn’t outrun your assurance capability.

Lens 4: Maturity fit (the gate)

Does this use case match your current maturity stage?

Gartner’s maturity model approach emphasizes readiness across core areas like strategy, product, governance, engineering, data, operating model, and culture.

Rule: If maturity fit fails, you don’t “prioritize harder.” You either reduce scope or prioritize the enabling capability first.

Lens 5: Change load

Will this stick in the workflow?

Adoption isn’t a communications plan. It’s the reality that people must trust outputs, managers must adjust expectations, and processes must change without collapsing.

Rule: A low change-load use case can be a strategic maturity builder, even if it isn’t the biggest value on paper.
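To make the “sorting algorithm” idea concrete, here is a minimal sketch of the five lenses as a gate-then-rank check. Everything in it is an illustrative assumption rather than part of the framework: the 1-5 scale, the `MATURITY_GATE` threshold, the field names, and the exact wording of each outcome. The one thing it tries to show faithfully is the ordering: maturity fit is a gate that value cannot override, liability should not exceed assurance capability, and value is only compared among use cases that pass.

```python
from dataclasses import dataclass

# Hypothetical 1-5 scale for every lens; the article does not prescribe one.
@dataclass
class UseCase:
    name: str
    value: int         # Lens 1: size of the measurable outcome
    feasibility: int   # Lens 2: can we operate it, not just prototype it
    assurance: int     # Lens 3: how well we can defend it today
    liability: int     # Lens 3: impact if it goes wrong (5 = severe)
    maturity_fit: int  # Lens 4: match with the current maturity stage
    change_load: int   # Lens 5: workflow disruption (5 = heavy)

MATURITY_GATE = 3  # assumed threshold, tune to your own scale

def assess(uc: UseCase) -> str:
    # Lens 4 is a gate: a high value score never overrides it.
    if uc.maturity_fit < MATURITY_GATE:
        return "reduce scope or fund the enabling capability first"
    # Lens 3: liability should not outrun assurance capability.
    if uc.liability > uc.assurance:
        return "hold until assurance catches up"
    # Lens 2: a prototype is not an operable system.
    if uc.feasibility < 3:
        return "prototype only; not yet operable"
    return "candidate for the roadmap"

def rank(candidates):
    # Only use cases that pass every gate are ranked:
    # highest value first, lower change load breaks ties.
    passed = [uc for uc in candidates if assess(uc) == "candidate for the roadmap"]
    return sorted(passed, key=lambda uc: (-uc.value, uc.change_load))

assist = UseCase("support agent assist", value=3, feasibility=4,
                 assurance=3, liability=2, maturity_fit=4, change_load=2)
credit = UseCase("automated credit decisions", value=5, feasibility=3,
                 assurance=2, liability=5, maturity_fit=1, change_load=4)
print(assess(assist))  # candidate for the roadmap
print(assess(credit))  # reduce scope or fund the enabling capability first
```

The scores themselves are judgment calls made by the people in the room; the sketch only fixes the order in which those judgments are applied.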

The maturity-based roadmap: what to do first, second, and never (yet)

Maturity-based prioritization is sequencing logic:

- First: Build credibility + reduce delivery friction

- Second: Prove repeatability + scale in bounded domains

- Never (yet): Avoid high-liability automation until governance, measurement, and operating model are ready

Let’s translate that into practical executive guidance.

What to do first: “Credibility builders” and “constraint removers”

Your first wave should do two things at once.

A) Credibility builders (low-risk, high-visibility, fast learning)

Pick use cases where:

- The workflow is well understood,

- The harm of a mistake is limited,

- People can validate outputs,

- Impact can be measured quickly.

Examples (stage-appropriate in many orgs):

- Internal knowledge assistant for policy/search with boundaries and citations

- Drafting support (emails, proposals, job descriptions, meeting notes), human-in-the-loop

- Customer support agent assist (suggestions, not autonomous actions)

- Document classification and routing with audit logs

Why these matter: they produce signal, build adoption muscle, and force early conversations about privacy, permissions, and workflow design, without betting the company.

B) Constraint removers (capability investments that unlock the next tier)

This is the part that leaders underfund because it doesn’t look like “AI innovation.” But it’s what turns one-off wins into a scalable program.

Typical constraints that block scaling:

- no shared approach to data access and lineage

- no monitoring/measurement standards

- unclear decision rights for model risk

- no “how we deploy” pattern (even if vendor-hosted)

- unclear policy for sensitive data and third-party tools

If you’re in a regulated or high-risk domain, governance maturity isn’t optional. ISO/IEC 42001 is explicitly about establishing and improving an AI management system to support the responsible development/use of AI systems.

First-wave rule: Every credibility builder should pressure-test at least one constraint remover. That’s how you mature without stalling.
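The first-wave rule can be checked mechanically once the pairings are written down. The sketch below is one hypothetical way to do that: the function name `check_first_wave`, the input shape, and the sample labels are all assumptions made for illustration. It reports credibility builders that pressure-test nothing, and constraint removers that no builder exercises.

```python
def check_first_wave(pairings, constraint_removers):
    """Flag gaps in a first-wave plan.

    pairings: dict mapping each credibility builder to the set of
              constraint removers it pressure-tests (hypothetical shape).
    constraint_removers: all constraint removers planned for the wave.
    """
    # Builders that violate the rule: they exercise no constraint remover.
    idle_builders = sorted(b for b, tested in pairings.items() if not tested)
    # Removers funded in the wave that no builder will actually exercise.
    covered = set().union(*pairings.values())
    untested_removers = sorted(set(constraint_removers) - covered)
    return idle_builders, untested_removers

pairings = {
    "internal knowledge assistant": {"sensitive-data policy", "data access and lineage"},
    "customer support agent assist": {"monitoring standards"},
    "drafting support": set(),
}
removers = [
    "data access and lineage",
    "monitoring standards",
    "model-risk decision rights",
    "deployment pattern",
    "sensitive-data policy",
]
idle, untested = check_first_wave(pairings, removers)
print(idle)      # ['drafting support']
print(untested)  # ['deployment pattern', 'model-risk decision rights']
```

An empty first list means every credibility builder is doing double duty; a non-empty second list is a prompt to ask whether those capability investments will get a real test in this wave.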

What to do second: “Repeatable deployments” in bounded domains

Once you can deliver and operate a few use cases reliably, the roadmap shifts from “AI projects” to AI as a capability.

Second-wave initiatives tend to have:

- clear data ownership and quality controls

- defined metrics and monitoring expectations

- a stable operating cadence (who reviews what, how often)

- integration into real systems of record

- a consistent rollout pattern (training + workflow updates + adoption metrics)

Examples (often stage-appropriate here):

- Forecasting improvements (demand, inventory, staffing) with controlled decision impact

- Fraud/anomaly detection that triggers investigations (not automatic denial)

- Sales prioritization/lead scoring with human review and bias monitoring

- IT operations copilots that recommend actions with approvals

McKinsey’s 2025 work reinforces that scaling depends on adoption practices and operating model discipline, not novelty.

Second-wave rule: If you can’t run it through a consistent operating model (ownership, monitoring, incident response, retraining logic, change management), it’s still a pilot, no matter how sophisticated the model is.

What to do “Never (Yet)”: the roadmap’s most strategic word

“Never (yet)” doesn’t mean “never.” It means not until you can govern, measure, and defend the outcome.

These are the use cases you should explicitly deprioritize early, even when the ROI pitch is huge:

1) High-liability automation in regulated decisions

Examples: automated credit/loan decisions without explainability/appeals/monitoring; claims approvals/denials without robust controls; clinical decision support used without appropriate medical governance.

These are not “advanced use cases.” They are risk systems, and NIST AI RMF is a reminder that maturity includes ongoing risk management, not just model building.

2) Autonomous agents acting across systems of record

“Agentic” patterns raise the bar dramatically: permissioning, audit trails, error handling, rollback, and accountability.

If your org can’t consistently manage access, logging, and incident response, autonomy will outpace governance.

3) People-impacting decisions without bias and oversight maturity

Examples: hiring, performance evaluation, workforce management, student assessment.

If it influences livelihoods, reputations, or rights, your maturity gate must be strict.

Never (yet) rule: When the cost of a mistake includes legal exposure, reputational damage, or harm to individuals, you must prove governance and measurement maturity before scaling.

The executive decision: stop funding “more pilots” and fund “more maturity”

This is the decision that separates AI theater from AI value:

Do we optimize for the number of AI initiatives, or for the organization’s ability to deliver AI repeatedly?

The first creates an impressive portfolio slide and a trail of stalled pilots.

The second creates compounding capability.

If you’re seeing lots of pilots and little production impact, treat it as a maturity signal:

- unclear ownership

- slow approvals

- integration delays

- inconsistent tools

- lack of measurement standards

A maturity-based roadmap makes that visible, and forces the right investment sequence.

One practical move: run a 2-hour “Maturity + Portfolio Triage” session

You don’t need a months-long assessment to start. You need enough maturity signal to stop lying to yourself.

In one working session with the right leaders, you can:

- identify the top constraints blocking scale (governance, data, engineering, operating model, adoption),

- gate use cases by maturity fit,

- re-rank the roadmap into First / Second / Never (Yet).

Use the 5-Lens Stack as the structure and keep it brutally honest: if maturity fit fails, either reduce scope or prioritize the constraint remover first.
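The output of such a session can be captured in a few lines. The sketch below is an assumption-heavy illustration, not a prescribed method: the `triage` function, the `blocked_by` and `high_liability` fields, and the bucketing rule are all invented here. The assumed rule is that a high-liability use case with open constraints lands in Never (Yet), anything that is either high-liability or still blocked waits for the second wave, and the rest can go first. It also counts which constraints block the most use cases, which is a rough proxy for where constraint-remover funding should go.

```python
from collections import Counter

def triage(use_cases, resolved=frozenset()):
    """Re-rank a portfolio into First / Second / Never (Yet).

    use_cases: list of dicts with 'name', 'blocked_by' (constraint labels)
               and 'high_liability' (bool). All field names are hypothetical.
    resolved:  constraints the organization has already removed.
    """
    buckets = {"First": [], "Second": [], "Never (Yet)": []}
    blockers = Counter()
    for uc in use_cases:
        open_blockers = set(uc["blocked_by"]) - set(resolved)
        blockers.update(open_blockers)
        if uc["high_liability"] and open_blockers:
            bucket = "Never (Yet)"   # cannot yet govern, measure, and defend it
        elif uc["high_liability"] or open_blockers:
            bucket = "Second"        # wait for the constraint remover or a proven pattern
        else:
            bucket = "First"         # low liability and nothing blocking it
        buckets[bucket].append(uc["name"])
    # Constraints ordered by how many use cases they currently block.
    return buckets, blockers.most_common()

portfolio = [
    {"name": "knowledge assistant", "blocked_by": [], "high_liability": False},
    {"name": "demand forecasting", "blocked_by": ["monitoring", "data lineage"], "high_liability": False},
    {"name": "automated loan decisions", "blocked_by": ["monitoring", "decision rights"], "high_liability": True},
    {"name": "fraud triage", "blocked_by": ["monitoring"], "high_liability": False},
]
buckets, top_constraints = triage(portfolio)
print(buckets)
print(top_constraints[0])  # ('monitoring', 3)
```

Re-running the same function after a constraint is resolved shows which use cases move up, which makes the case for funding maturity in terms a portfolio review can follow.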

That’s how you turn the roadmap from a wish list into a delivery plan.